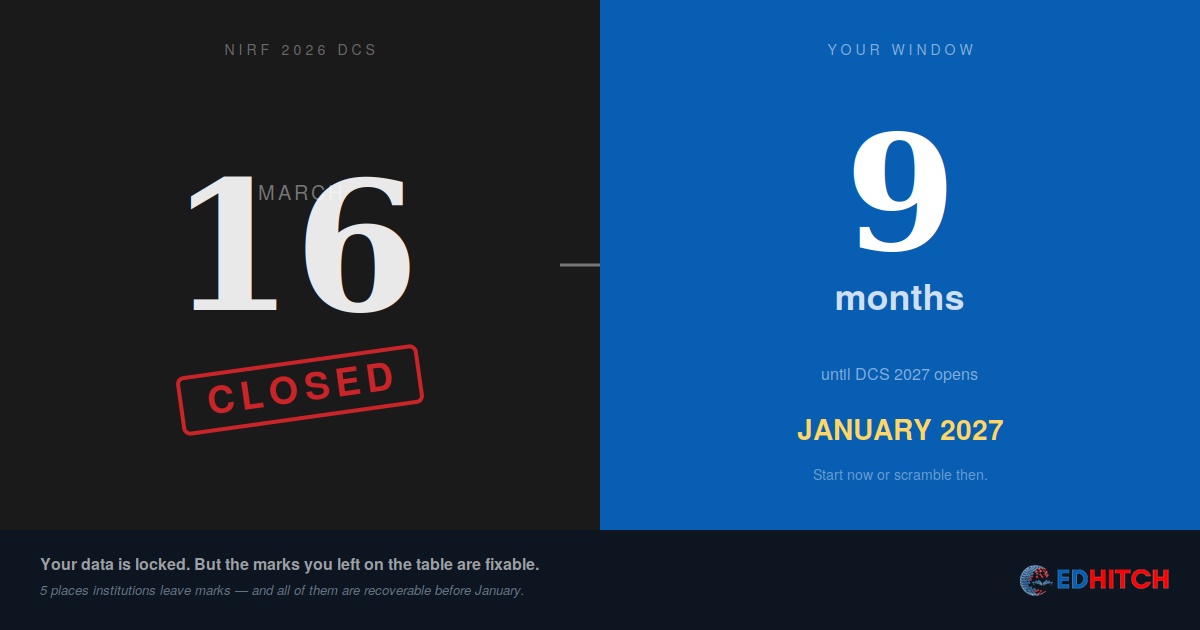

The NIRF 2026 Data Capture System closed on March 16. Your data is locked. Rankings are expected between July and August. Nothing you do now changes your NIRF 2026 outcome.

Most institutions will spend the next four months waiting. A few will spend them analysing what they just submitted, and discovering that the marks they left on the table had nothing to do with institutional quality.

Your submission is a mirror. Most institutions don't look.

Every DCS submission contains two kinds of information. The first is the data itself — student numbers, faculty counts, publications, placements, expenditure. The second, which almost nobody examines, is what the data reveals about how well your institution translates its reality into NIRF's scoring framework.

An institution that placed 74% of graduates but scored poorly on GO-GPH (worth 40 marks in Engineering) probably used its own placement definition instead of NIRF's. NIRF's GPH parameter includes placement, higher studies, and entrepreneurship. Most placement cells track only the first. The institution's placement rate is real. The NIRF score doesn't reflect it because the data wasn't translated into NIRF's language.

This isn't a quality problem. It's a data translation problem. And it's the single largest source of recoverable marks in the NIRF system.

The institutions that climb don't have better outcomes than the institutions that stagnate. They have better data discipline. The outcomes are often identical — the translation is different.

The 5 places institutions leave marks on the table

1. Scopus affiliations — RP-PU (35 marks in Engineering)

NIRF pulls publication data from Scopus and Web of Science. But a paper only counts for your institution if the author's Scopus profile lists your institution as their affiliation. Faculty who joined from another institution often carry their old affiliation for years. Faculty who publish under department names instead of the official institutional name create duplicate profiles.

The fix takes 30 minutes per faculty member — updating the Scopus Author Profile with the correct institutional affiliation. But most institutions don't do it because they don't know it's a problem until the score arrives. By then, the DCS has closed.

What to do now: Search your institution on Scopus. Compare the paper count Scopus shows against what your DCS submission claims. If there's a gap, you have affiliation mismatches. Fix them before January 2027.
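
One way to turn that comparison into a list of papers to chase is sketched below. It assumes you hold the DOIs of the publications behind your DCS count and a DOI column exported from your Scopus institution search; the function names and sample DOIs are illustrative, not part of any NIRF or Scopus tool. A DOI missing from the export may also simply not be indexed, so treat the output as a list to investigate rather than a confirmed affiliation error.

```python
def normalise_doi(doi: str) -> str:
    """Lower-case a DOI and strip common prefixes so equal DOIs compare equal."""
    doi = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
    return doi


def affiliation_gap(dcs_dois, scopus_dois):
    """Return DOIs claimed in the DCS list that are absent from the Scopus export."""
    claimed = {normalise_doi(d) for d in dcs_dois if d.strip()}
    indexed = {normalise_doi(d) for d in scopus_dois if d.strip()}
    return sorted(claimed - indexed)


# Illustrative data only (hypothetical DOIs).
dcs_claimed = ["10.1000/a1", "https://doi.org/10.1000/B2", "10.1000/c3"]
scopus_export = ["10.1000/a1", "doi:10.1000/b2"]

missing = affiliation_gap(dcs_claimed, scopus_export)
print(f"{len(missing)} claimed paper(s) not found under the institution: {missing}")
```

Each DOI in the result points to an author profile worth checking for an old or mis-spelled affiliation.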

2. Patent grants — RP-IPR (15 marks in Engineering)

NIRF's IPR parameter weights granted patents significantly more heavily than filed patents. A patent filed in 2022 and granted in 2025 should be reported as granted, but that only happens if someone checks its status. Most NIRF in-charges submit the number of patents filed and move on. They don't track which filed patents have since been granted.

What to do now: Pull your patent filing records. Check the Indian Patent Office database for every patent filed more than 18 months ago. Update your records with granted patent numbers. This data feeds directly into DCS 2027.
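
A minimal sketch of the first filtering step follows, assuming your filing records sit in a simple list with a filing date and an optional grant number. The field names (`application_no`, `filed_on`, `grant_no`) and the sample records are hypothetical. The status lookup on the Indian Patent Office database is still a manual step and is not automated here.

```python
from datetime import date


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months elapsed from `earlier` to `later`."""
    months = (later.year - earlier.year) * 12 + (later.month - earlier.month)
    if later.day < earlier.day:
        months -= 1  # the final month is not yet complete
    return months


def patents_to_recheck(records, today, min_age_months=18):
    """Application numbers filed at least `min_age_months` whole months ago
    that have no grant number recorded yet."""
    return [
        r["application_no"]
        for r in records
        if not r.get("grant_no")
        and months_between(r["filed_on"], today) >= min_age_months
    ]


# Illustrative records only (hypothetical field names and numbers).
records = [
    {"application_no": "APP-001", "filed_on": date(2022, 5, 10), "grant_no": None},
    {"application_no": "APP-002", "filed_on": date(2023, 1, 20), "grant_no": "GR-123"},
    {"application_no": "APP-003", "filed_on": date(2025, 11, 2), "grant_no": None},
]

to_check = patents_to_recheck(records, today=date(2026, 4, 1))
print(to_check)
```

Here only the 2022 filing without a grant number comes back, which is the kind of record worth looking up first.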

3. Financial Resources — TLR-FRU (30 marks in Engineering)

FRU measures capital expenditure (excluding new building construction) and operational expenditure per student. The exclusion of new building construction is specified in NIRF's formula — but many institutions report total capital expenditure including construction. This inflates the numerator, which sounds good until you realise NIRF's verification may correct it downward.

The subtler problem: institutions that grew student intake without proportionally growing expenditure see their per-student ratios worsen. Spending ₹50 crore doesn't help if student count grew 25% and expenditure grew only 15%.

What to do now: Recalculate your FRU using NIRF's formula — separating construction from other capital expenditure. Compare per-student figures against the three-year average. If the trend is declining despite institutional growth, the problem is denominator management.
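
The arithmetic behind both points can be sketched as below. This is a simplified illustration of the per-student ratios described above, not NIRF's actual scoring function; the function names and the sample figures (in ₹ lakh) are assumptions made for the example.

```python
from statistics import mean


def per_student(capex_total, new_construction, opex, students):
    """Return (capital, operational) spend per student,
    with new building construction removed from capital expenditure."""
    if students <= 0:
        raise ValueError("students must be positive")
    return (capex_total - new_construction) / students, opex / students


def ratio_change(expenditure_growth, student_growth):
    """Fractional change in a per-student ratio, given fractional growth
    in expenditure (numerator) and student count (denominator)."""
    return (1 + expenditure_growth) / (1 + student_growth) - 1


def below_three_year_average(current, previous_three):
    """True if the current per-student figure is under the mean of the prior three."""
    return current < mean(previous_three)


# Illustrative figures in ₹ lakh (hypothetical).
capex_ps, opex_ps = per_student(
    capex_total=1200, new_construction=400, opex=3800, students=5000
)
print(f"capital per student: {capex_ps:.2f}, operational per student: {opex_ps:.2f}")

# The example from the text: expenditure up 15%, students up 25%.
change = ratio_change(0.15, 0.25)
print(f"per-student ratio change: {change:.1%}")  # 1.15 / 1.25 - 1 = -8.0%

declining = below_three_year_average(opex_ps, [0.95, 0.93, 0.90])
```

Expenditure grew in absolute terms, yet the per-student figure fell by 8%. That is the denominator effect at work.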

4. Graduate Outcomes definitions — GO-GPH (40 marks in Engineering)

NIRF's GPH combines placement, higher studies, and entrepreneurship. Most placement cells report only placement. An institution with 70% placement, 12% going to higher studies, and 3% in entrepreneurship has a GPH-eligible rate of 85% — but if only placement was reported, NIRF sees 70%.

The gap between institutional placement rate and NIRF GO score is almost always a definition mismatch, not a quality gap. The students were placed. The data didn't say so in NIRF's terms.
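
The example above works out as follows. This sketch only computes the share of graduates that falls into the three categories the text names. It is not NIRF's scoring formula, and the function name and cohort size of 1,000 are assumptions for illustration.

```python
def gph_eligible_rate(graduates, placed, higher_studies, entrepreneurs):
    """Share of graduates who were placed, went to higher studies,
    or started a venture. Each graduate should be counted in one category only."""
    if graduates <= 0:
        raise ValueError("graduates must be positive")
    counted = placed + higher_studies + entrepreneurs
    if counted > graduates:
        raise ValueError("categories overlap or exceed the graduating cohort")
    return counted / graduates


# Hypothetical cohort of 1,000 graduates matching the percentages in the text.
full = gph_eligible_rate(1000, placed=700, higher_studies=120, entrepreneurs=30)
placement_only = gph_eligible_rate(1000, placed=700, higher_studies=0, entrepreneurs=0)

print(f"all three categories: {full:.0%}")          # 85%
print(f"placement only:       {placement_only:.0%}")  # 70%
```

The 15-point difference comes entirely from which categories were reported, not from any change in what graduates actually did.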

5. SDG-tagged publications — RP (new sub-parameter)

NIRF added Publications related to Sustainable Development Goals (PSDGs) as a scoring component. Scopus automatically tags publications against the 17 UN SDGs using AI classification. Most institutions haven't even checked how many of their publications carry SDG tags. The Scopus SDG filter takes 30 minutes to run and export. Submitting the result to the NIRF portal is straightforward. This is likely the most under-claimed sub-parameter in all of NIRF.

What to do now: Run the Scopus SDG filter for your institution. Export the tagged publications. Note the count. This becomes a verified input for DCS 2027.

The 9-month window most institutions waste

DCS 2027 will open in January 2027. That's roughly nine months from now. Most institutions will do nothing until December 2026, then scramble to fill the portal in two weeks.

The institutions that actually move up in NIRF don't start in December. They start in April — right now — with a systematic review of what they just submitted, where the gaps are, and what can be fixed in nine months.

The improvements that matter aren't the ones that take years. They're the ones that take weeks: correcting Scopus affiliations, updating patent records, reclassifying financial data, expanding the GO definition, running the SDG export. These are data hygiene tasks, not institutional transformation projects. They recover marks that were always yours — you just didn't claim them.

The 9-month window between DCS closing and DCS reopening is where rank movement happens. Not by becoming a better institution — by becoming a more accurate one.

What your NIRF 2026 submission is already telling you

If you have a copy of your DCS submission (and you should; if you don't, that itself is a governance problem), you can run a self-diagnostic right now. For each parameter, ask the following questions:

TLR: Did we report faculty correctly — regular full-time only, no visiting or contractual? Did FRU exclude new building construction?

RP: Does our Scopus institution profile match our DCS publication count? Did we check for granted patents? Did we submit SDG-tagged publications?

GO: Did our placement data include higher studies and entrepreneurship — or just placement? Did we use NIRF's definition of "graduated in minimum stipulated time" for GUE?

OI: Did we report all students receiving full tuition fee reimbursement — from government, institutional funds, and private bodies? Did we include women faculty percentage alongside women student percentage?

Every "no" answer is a mark left on the table. And every one of them is fixable before January 2027.

Want to know exactly what your submission left on the table?

Our NIRF Diagnostic is a four-week, parameter-by-parameter analysis of your DCS submission against NIRF's scoring methodology. We identify recoverable marks, data translation gaps, and a 12-month improvement roadmap for NIRF 2027.

Learn About the Diagnostic →

Frequently Asked Questions

When did NIRF 2026 DCS close?

March 16, 2026. No further submissions or corrections are accepted after the deadline.

When will NIRF 2026 rankings be announced?

NIRF rankings are typically announced between July and September. Based on past patterns, NIRF 2026 results are expected between July and August 2026.

Can institutions change data after DCS closes?

No. Institution-reported data is locked. NIRF may provide a verification window for disputes over third-party data (Scopus/WoS/Derwent), but self-reported data cannot be changed.

What is the biggest source of NIRF score leakage?

Data translation gaps — the difference between institutional reality and what the NIRF portal captured. Uncorrected Scopus affiliations, unreported patent grants, FRU misclassification, and narrow GO definitions account for 5-15 marks in most institutions.

How can institutions improve for NIRF 2027?

Start now. Analyse your 2026 submission, fix Scopus affiliations, check patent grant status, reclassify FRU expenditure, expand GO definitions, and run the SDG export. These are data hygiene tasks — weeks, not years.

Related Reading

- We Improved Everything. Our NIRF Rank Dropped. What Happened?

- The Institution Was Strong. The Data Was Weak.

- ₹50 Crore Spent. NIRF Rank Didn't Move. Why?

- 82% Placement. Low NIRF GO Score. Why?

- NAAC vs NIRF vs NBA: 68% Overlaps — One Strategy, Not Three

- One Nation, One Data — Why NAAC, NBA and NIRF Will Cross-Check Everything

Edhitch

Accreditation & Ranking Intelligence · NAAC · NBA · NIRF · 12 Years · 100+ Institutions