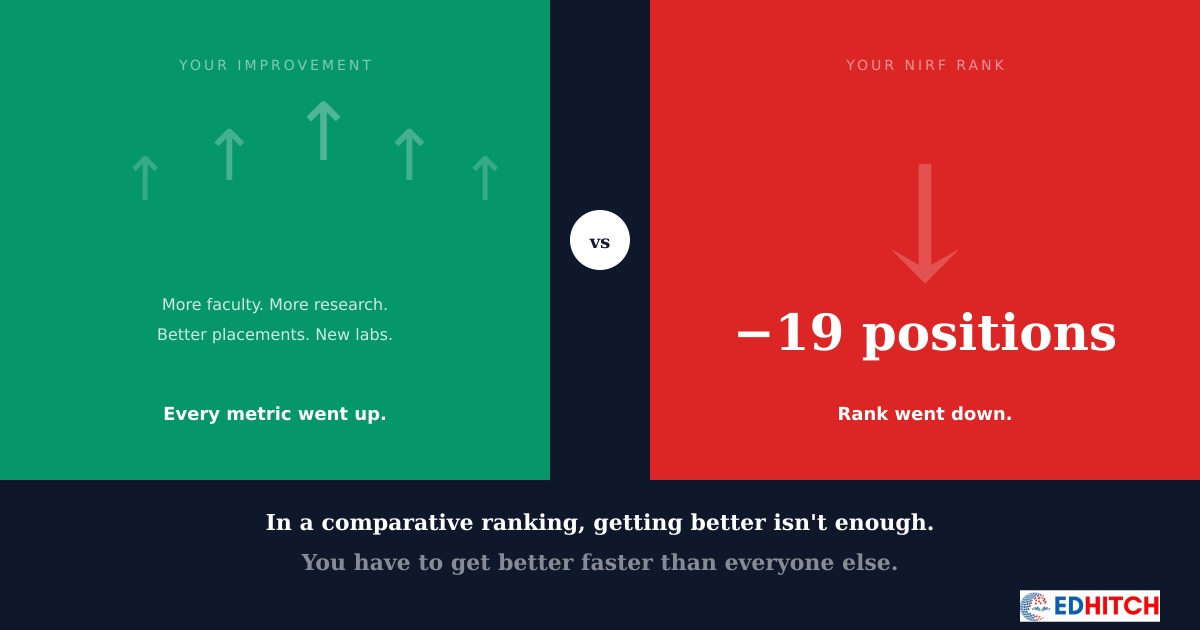

A Dean at a pharmacy college in Rajasthan showed us his institution's progress report. Over two years, they had hired twelve new PhD faculty. Published sixty more papers than the previous cycle. Built a new research lab. Increased their placement rate from 68% to 79%.

Their NIRF rank dropped by nineteen positions.

"We did everything right," he said. "Every metric improved. How did we go backwards?"

He was right — every metric had improved. What he didn't account for was that NIRF doesn't measure improvement. It measures position.

NIRF is not a scorecard. It's a race.

Most institutional leaders think of NIRF like a grading system. Score above a certain threshold, get a certain rank. Improve your score, improve your rank. The logic seems obvious.

But NIRF doesn't work that way. There is no fixed threshold. There is no guaranteed rank for a given score. Your rank is entirely determined by how your numbers compare against every other institution in your category — in that specific year.

If you scored 45 last year and ranked 120th, scoring 48 this year doesn't guarantee a better rank. If the institution that scored 44 last year now scores 50, and the one that scored 43 now scores 49 — you've improved, but you've fallen behind.
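To make the arithmetic concrete, here is a minimal sketch that ranks the three institutions from the example above. The institution labels and the `rank_of` helper are hypothetical, and the three-way slice stands in for a full category; it only illustrates that rank comes from ordering scores, not from the scores themselves.

```python
def rank_of(name, scores):
    """Return the 1-based rank of `name` when institutions are sorted by score, highest first."""
    ordered = sorted(scores, key=scores.get, reverse=True)
    return ordered.index(name) + 1


# Scores taken from the example in the text; names are placeholders.
last_year = {"You": 45, "Peer A": 44, "Peer B": 43}
this_year = {"You": 48, "Peer A": 50, "Peer B": 49}

print(rank_of("You", last_year))  # 1 (top of this three-institution slice)
print(rank_of("You", this_year))  # 3 (score rose by 3 points, position fell by 2)
```

Your score went up in both absolute and percentage terms, yet both neighbours moved past you because their gains were larger.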

It's a race. You can run faster than last year and still lose positions — if others ran faster than you did.

In a comparative ranking, getting better isn't the goal. Getting better faster than everyone around you is.

Why this confuses leadership

When a VC or Principal reviews the institution's performance, they look at internal metrics. Faculty hired: up. Publications: up. Placements: up. Infrastructure: up. Every arrow points in the right direction.

Then the NIRF result arrives. Rank: down.

The natural reaction is disbelief. Then frustration. Then the blame cycle — the IQAC didn't fill the portal correctly, the committee didn't work hard enough, the data wasn't captured properly.

Sometimes the blame is justified. But often, the data was fine. The institution did improve. The problem is that the institution improved in isolation — without knowing what the institutions ranked above them were doing at the same time.

An institution that improves its research output by 20% feels good about itself. But if the five institutions above it improved by 30%, the gap widened — not narrowed. The rank reflects the gap, not the effort.

The three-year averaging trap

NIRF uses a three-year data average for most parameters. This means your score in any given year is influenced by the previous two years of data as well.

An institution that improved dramatically this year might not see the full effect of that improvement in the current NIRF cycle — because the older, weaker years are still pulling the average down.

Conversely, an institution that had a strong year two years ago might see its rank drop now — as that strong year exits the three-year window and is replaced by a more recent, weaker one.

This averaging creates a lag between institutional action and ranking movement. Institutions that don't understand this lag make two mistakes: they credit this year's decisions for gains that actually come from strong older data still lifting the average, and they blame this year's decisions for drops that actually come from strong older data exiting the window.
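The lag can be seen in a small sketch. It assumes a simple unweighted mean over the current and two preceding years, which is a simplification; NIRF's actual per-parameter formulas may differ. The annual figures and the `three_year_average` helper are invented for illustration.

```python
def three_year_average(values, year_index):
    """Unweighted mean of the value at `year_index` and the two years before it."""
    window = values[year_index - 2 : year_index + 1]
    return sum(window) / len(window)


# Hypothetical annual output: flat at 40, then a jump to 100 that is sustained.
annual = [40, 40, 40, 100, 100, 100]

for i in range(2, len(annual)):
    print(f"year {i}: actual={annual[i]}, averaged={three_year_average(annual, i)}")
# year 2: actual=40, averaged=40.0
# year 3: actual=100, averaged=60.0
# year 4: actual=100, averaged=80.0
# year 5: actual=100, averaged=100.0
```

The jump happens in year 3, but the averaged figure only catches up in year 5, once both weaker years have left the window.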

NIRF's three-year average means your rank today is partly a result of decisions made three years ago. And your decisions today won't fully show up for another two years.

The invisible competitor problem

In a grading system, you compete against a standard. In a ranking system, you compete against institutions — and you can't see what they're doing.

You don't know if the institution ranked five positions above you just hired twenty faculty. You don't know if they signed a research partnership that will triple their publication output next year. You don't know if they restructured their financial reporting to better align with NIRF's categories.

You're running a race where you can see your own speed but not the speed of anyone else. The only signal you get is the rank — and by the time you see it, the race for that cycle is already over.

This is why blind improvement — hiring more, publishing more, spending more — doesn't reliably move ranks. It's not about doing more. It's about knowing exactly where the gap is between you and the institutions around you, and directing effort precisely there.

What the institutions that climb actually do

The institutions we've seen make consistent rank movement don't work harder than everyone else. They work differently.

They start by understanding where they stand relative to specific peer institutions — not just their own score, but how their score compares across each dimension of the NIRF framework. They identify the specific areas where the gap with the institutions above them is smallest — because that's where a targeted improvement can flip a rank position.
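A rough sketch of that comparison, assuming you have parameter-wise scores for your institution and for the peer ranked just above. The labels follow NIRF's five broad heads (TLR, RP, GO, OI, PR); every number here is invented, and `gaps_to_peer` is a hypothetical helper, not part of any NIRF tool.

```python
def gaps_to_peer(own, peer):
    """Return (parameter, gap) pairs where the peer leads, smallest gap first."""
    behind = {p: peer[p] - own[p] for p in own if peer[p] > own[p]}
    return sorted(behind.items(), key=lambda item: item[1])


# Illustrative scores only.
you = {"TLR": 52.0, "RP": 18.0, "GO": 61.0, "OI": 55.0, "PR": 4.0}
peer_above = {"TLR": 55.0, "RP": 30.0, "GO": 62.5, "OI": 54.0, "PR": 9.0}

for parameter, gap in gaps_to_peer(you, peer_above):
    print(f"{parameter}: behind by {gap}")
# GO: behind by 1.5
# TLR: behind by 3.0
# PR: behind by 5.0
# RP: behind by 12.0
```

In this made-up case the research gap is the largest, but the graduation-outcomes gap is the one a targeted effort could close soonest. A fuller version would also weight each gap by the parameter's share of the total score.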

They also identify areas where they're losing marks not because of genuine weakness, but because of how their data is reported. In our experience across 100+ institutions, this "data translation gap" accounts for a large portion of the rank difference between institutions of similar quality.

And they think in three-year cycles — not one-year sprints. Because they understand the averaging mechanism, they plan improvements that compound over time rather than chasing quick fixes that don't survive the three-year window.

None of this is possible without a diagnostic that reads the institution's position comparatively — not just internally. And that's the piece most institutions are missing.

The institutions that climb don't just ask "how do we improve?" They ask "where exactly are we losing to the institutions ranked above us?" The answer is always specific. And it's always different from what the internal reports suggest.

Know Where You Stand — Relative to Your Peers

Our NIRF Diagnostic is a parameter-wise assessment that reads your position comparatively. We identify exactly where the gap with peer institutions exists, which sub-parameters are closing it, and which are widening it. Not a generic report — an institution-specific comparative analysis.

Learn About the Diagnostic →

Frequently Asked Questions

Why did my NIRF rank drop despite improvement?

NIRF is comparative. If you improved by 5% but your peers improved by 8%, your rank can drop, not because you got worse but because others got better faster.

How does NIRF comparative ranking work?

NIRF ranks institutions relative to each other — not against a benchmark. Your rank depends on how every other institution in your category performed in the same cycle.

Can rank drop even if score improves?

Yes. If your score improves but institutions around you improve more, your position drops despite better absolute performance.

Why is my rank stuck at the same position?

Most likely, you're improving at roughly the same pace as your peer group. Moving up requires improving faster than the institutions above you, which in turn requires knowing where they're gaining.

How do institutions actually move up?

By understanding their position relative to specific peers and directing effort where the gap is smallest. Blind improvement without peer context is why most institutions stay stuck.

Edhitch

Accreditation & Ranking Intelligence · NAAC · NBA · NIRF · 12 Years · 100+ Institutions