A few years ago, we started working with a management institution on NBA accreditation.

We did the full engagement. Faculty profiles. Programme outcomes. CO-PO mapping. Placement analysis. Outcome-based education implementation. The institution was completely focused on NBA — and rightly so. That was their immediate goal.

Then the NIRF window opened.

A junior faculty member was asked to "fill up the portal." Nobody connected him with the NBA team. Nobody shared the data that had already been compiled. He started from scratch — pulling numbers independently from departments, calling placement cells, chasing faculty for qualification details.

The data had mistakes. The institution didn't get ranked.

A few months later, the management decided to pursue NAAC accreditation. A third team was formed. They started collecting the same information — again independently, again from the beginning.

Three frameworks. Three teams. Three timelines. The same institution. The same faculty. The same students. But three completely disconnected efforts — each one blind to the work already done for the others.

This isn't an unusual story. This is how the majority of Indian institutions operate. And the problem isn't data. The problem is the absence of an integrated accreditation and ranking strategy.

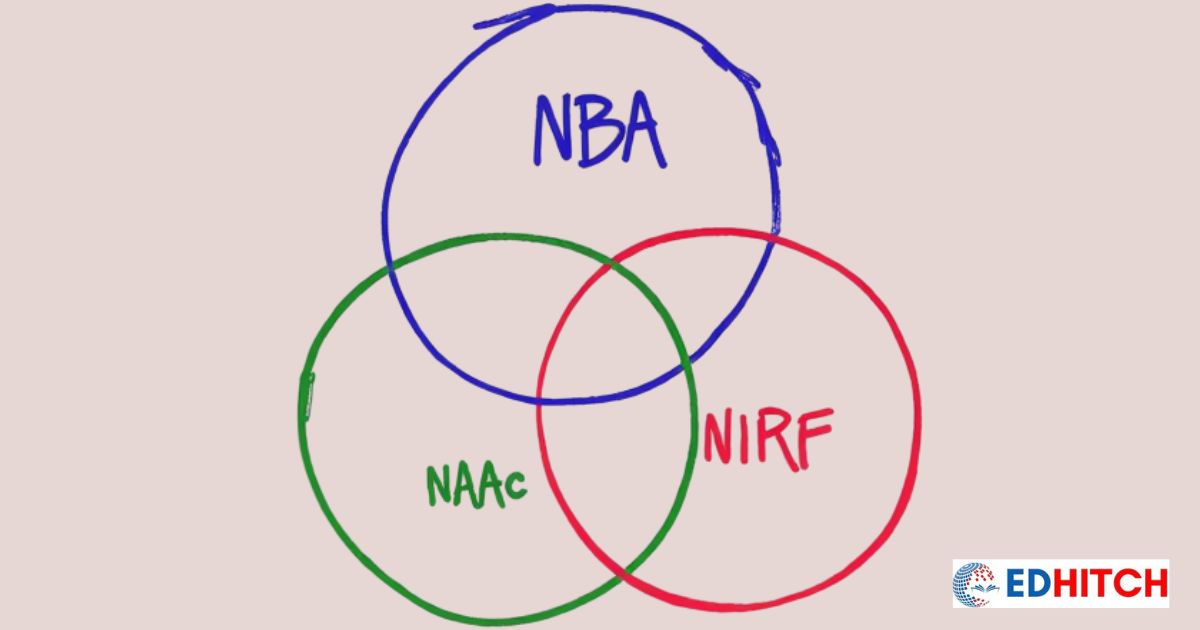

NAAC, NBA and NIRF are not three different things

If you step back and look at what these frameworks actually evaluate, a pattern becomes obvious.

All three care about the quality of your teaching and your teachers. NIRF measures it under Teaching, Learning and Resources (TLR). NAAC evaluates it across Criterion 2 (Teaching-Learning and Evaluation) and Criterion 6 (Governance, Leadership and Management). NBA assesses it through programme-specific faculty and teaching-learning processes in the Self-Assessment Report (SAR).

All three care about your research and intellectual output. NIRF has Research and Professional Practice (RP). NAAC covers it under Criterion 3 (Research, Innovations and Extension). NBA evaluates faculty research in the context of each programme.

All three care about what happens to your students after they graduate. NIRF calls it Graduation Outcomes (GO). NAAC covers it under Criterion 5 (Student Support and Progression). NBA tracks it through graduate attributes and outcome attainment.

All three care about whether your institution is inclusive, financially sustainable, and well-governed. NIRF has Outreach and Inclusivity (OI). NAAC covers governance, gender equity, and environmental sustainability under Criteria 6 and 7. NBA looks at institutional support systems.

Even perception — NIRF's fifth parameter — has echoes in NAAC's emphasis on stakeholder feedback and NBA's employer and alumni surveys.

The names change. The formats change. The level of analysis changes — NBA looks at a specific programme, NAAC looks at the whole institution, NIRF compares you against every other institution in the country. But the fundamental question all three are asking is the same: Is this institution delivering quality education, and can it prove it?

Roughly 68% of what these frameworks require overlaps. That's not a data coincidence. It's because they're all measuring the same thing from different angles.
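
One way to make this overlap usable is to write it down as a lookup table. The sketch below encodes only the correspondences named in this section. The theme keys and the `themes_for` helper are illustrative names of our own, not an official crosswalk published by NAAC, NBA or NIRF.

```python
# Illustrative crosswalk built only from the correspondences described above.
# Theme keys and the helper are hypothetical names, not an official mapping.
CROSSWALK = {
    "teaching_quality": {
        "NIRF": ["TLR"],
        "NAAC": ["Criterion 2", "Criterion 6"],
        "NBA": ["SAR: programme faculty and teaching-learning processes"],
    },
    "research_output": {
        "NIRF": ["RP"],
        "NAAC": ["Criterion 3"],
        "NBA": ["Faculty research per programme"],
    },
    "graduate_outcomes": {
        "NIRF": ["GO"],
        "NAAC": ["Criterion 5"],
        "NBA": ["Graduate attributes and outcome attainment"],
    },
    "inclusivity_and_governance": {
        "NIRF": ["OI"],
        "NAAC": ["Criterion 6", "Criterion 7"],
        "NBA": ["Institutional support systems"],
    },
    "perception": {
        "NIRF": ["Perception"],
        "NAAC": ["Stakeholder feedback"],
        "NBA": ["Employer and alumni surveys"],
    },
}


def themes_for(framework: str, item: str) -> list[str]:
    """Return every theme whose entry for `framework` lists `item`."""
    return [
        theme
        for theme, mapping in CROSSWALK.items()
        if item in mapping.get(framework, [])
    ]


# NAAC Criterion 6 shows up under two themes, so evidence gathered for it
# is reusable in more than one place.
print(themes_for("NAAC", "Criterion 6"))
```

Even a table this small gives an IQAC team a shared reference: before collecting anything, look up which other framework already asks for it.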

One important difference: the level of analysis

There's a nuance here that matters.

NBA works at the programme level. If you have a B.Tech in Computer Science pursuing NBA accreditation, the NBA SAR (now aligned with the revised SAR 2025 requirements) is about that specific programme: its faculty, its students, its outcomes.

NAAC works at the institution level. The NAAC Self-Study Report (SSR) covers the entire college or university: all programmes, all departments, all data consolidated across the seven NAAC criteria.

NIRF is comparative. It ranks your institution against others using the same five NIRF parameters: TLR, RP, GO, OI and Perception.

So the same faculty member's data gets used three ways: as part of a programme (NBA), as part of the institution (NAAC), and as part of a national comparison (NIRF). Three lenses on the same data — not three different data sets.

Once you understand this, the idea of collecting separately starts to feel absurd.
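
The "three lenses on the same data" idea can be sketched in a few lines. This is a minimal illustration under our own assumptions: the `Faculty` fields, the sample records and the three view functions are hypothetical, and none of them reproduces an actual NBA, NAAC or NIRF data format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Faculty:
    name: str
    programme: str
    has_phd: bool


# One shared register; the names and programmes are made-up sample data.
REGISTER = [
    Faculty("Faculty A", "B.Tech CSE", True),
    Faculty("Faculty B", "B.Tech CSE", False),
    Faculty("Faculty C", "MBA", True),
    Faculty("Faculty D", "MBA", True),
]


def programme_view(register, programme):
    """NBA-style lens: only the faculty attached to one programme."""
    return [f for f in register if f.programme == programme]


def institution_view(register):
    """NAAC-style lens: totals consolidated across all programmes."""
    return {
        "total_faculty": len(register),
        "faculty_with_phd": sum(f.has_phd for f in register),
    }


def comparative_view(register):
    """NIRF-style lens: one ratio that can be compared across institutions."""
    totals = institution_view(register)
    if totals["total_faculty"] == 0:
        return 0.0
    return totals["faculty_with_phd"] / totals["total_faculty"]


print(len(programme_view(REGISTER, "B.Tech CSE")))
print(institution_view(REGISTER))
print(comparative_view(REGISTER))
```

All three functions read the same `REGISTER`. Correct one record there and every view changes with it, which is the property separate spreadsheets cannot give you.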

The real problem: three compliance projects instead of one quality strategy

Here's what happens at most institutions.

NIRF deadline approaches. Emergency mode. The Internal Quality Assurance Cell (IQAC) coordinator or a designated faculty member fills the portal. Data is pulled, sometimes verified, sometimes not. Submitted. Done. Everyone moves on.

Three months later, NAAC SSR preparation starts. Different team. Different mood. Six months of intensive SSR writing. Evidence folders. DVV preparation. Peer team visit rehearsals. It feels like a completely separate project — because it is treated like one.

Meanwhile, the NBA coordinator for engineering or pharmacy is building NBA SAR documentation for two or three programmes. Again independently. Again pulling the same faculty qualifications, the same student outcomes, the same research data — but in NBA's format.

The institution's quality efforts are fragmented into three disconnected compliance exercises. Each one feels urgent. Each one consumes enormous energy. And none of them build on the work done for the others.

This is not a process failure. It's a strategy failure.

The institution doesn't have an integrated quality vision. It has three deadlines.

Why does this keep happening?

Because institutions are too busy working on whatever is due next.

That's the honest answer. It's not that IQAC coordinators don't know the overlap exists. They feel it every time they finish one submission and start the next, thinking "Didn't I just compile this same information two months ago?"

But there's never time to step back. The next deadline is always approaching. The management's attention is on whatever framework is currently active. "This is the time when our focus is NBA, so we'll work only on this. Nothing else. We have no time for anything else."

I've heard this sentence — or something very close to it — at dozens of institutions.

The other problem is structural. The NBA team is often in the engineering department. The IQAC coordinator handles NAAC. NIRF gets assigned to whoever is available. These teams don't share templates, don't share processes, and often don't even share a WhatsApp group.

Without someone at the leadership level who sees all three as one institutional quality system, someone who says "these three are connected, and we will treat them as connected," nothing changes. IQAC best practices and systems don't establish themselves. Someone has to decide.

What fragmentation actually costs

More than most institutions want to admit.

It costs your best people their time. Senior faculty, IQAC coordinators, NBA coordinators — the most qualified people in your institution — spend weeks every cycle on compilation work. When you run three separate processes, that time triples.

It costs you accuracy. When three different teams compile independently, the numbers diverge. Your NAAC SSR says 145 faculty with PhDs. Your NIRF submission says 138. Your AISHE (All India Survey on Higher Education) data says 132. Three people extracted from the same HR register differently, with different inclusion criteria, at different points in the year.

This is how NAAC DVV queries happen. NAAC's Data Verification and Validation process cross-checks your claims against NIRF and AISHE data. If your NAAC number doesn't match your NIRF number for the same metric, that's a red flag. Not because you fabricated anything, but because your own teams counted differently. And during NAAC peer team visits, experienced assessors notice these inconsistencies immediately.
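
To see how honest teams end up with different numbers, consider this small sketch. It assumes a hypothetical HR register with made-up records and a counting function of our own design. The inclusion rules shown (cut-off date, whether part-time staff count) are examples of where teams can differ, not the official definitions used by NAAC, NIRF or AISHE.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class FacultyRecord:
    name: str
    phd_awarded: Optional[date]  # None if no PhD yet
    joined: date
    left: Optional[date]         # None if still employed
    full_time: bool


def count_phd_faculty(register, as_of, include_part_time):
    """Count faculty who are employed and hold a PhD on the `as_of` date."""
    count = 0
    for r in register:
        employed = r.joined <= as_of and (r.left is None or r.left > as_of)
        has_phd = r.phd_awarded is not None and r.phd_awarded <= as_of
        eligible = r.full_time or include_part_time
        if employed and has_phd and eligible:
            count += 1
    return count


def is_consistent(reported):
    """True when every submission reports the same figure for a metric."""
    return len(set(reported.values())) <= 1


# Made-up sample register with the edge cases that cause divergence.
REGISTER = [
    FacultyRecord("A", date(2015, 6, 1), date(2016, 7, 1), None, True),
    FacultyRecord("B", date(2024, 11, 15), date(2019, 7, 1), None, True),  # PhD awarded mid-year
    FacultyRecord("C", date(2012, 3, 1), date(2018, 1, 10), date(2024, 9, 30), True),  # left mid-year
    FacultyRecord("D", date(2010, 5, 20), date(2021, 8, 1), None, False),  # part-time
]

# Two teams, same register, different cut-off dates and inclusion rules.
team_one = count_phd_faculty(REGISTER, date(2024, 7, 1), include_part_time=True)
team_two = count_phd_faculty(REGISTER, date(2024, 12, 31), include_part_time=False)
print(team_one, team_two)

# The figures quoted above fail the same check.
print(is_consistent({"NAAC SSR": 145, "NIRF": 138, "AISHE": 132}))
```

Neither team made an error. They simply answered slightly different questions. Agreeing on one counting rule and one cut-off date, then running a check like `is_consistent` before any submission, catches the mismatch before an assessor does.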

It costs you institutional memory. When each framework is handled as a one-time project, the knowledge disappears when the team disbands. The next cycle starts from zero.

An integrated strategy doesn't just avoid these costs. It compounds in the other direction. Fix a weakness identified through NIRF diagnosis — your NAAC grade improves. Strengthen a programme for NBA — your NIRF TLR score rises. Build a robust graduate tracking system once — it feeds all three frameworks every year, automatically.

What does an integrated strategy actually look like?

It starts with a shift in thinking. Not "How do we prepare for NAAC?" but "How do we build institutional quality that satisfies NAAC, NBA and NIRF simultaneously?"

That shift changes everything. In practice, it means four things:

Understanding the overlap — actually mapping where NAAC criteria, NBA SAR sections, and NIRF parameters ask for the same thing. Not vaguely knowing they overlap, but seeing it mapped so your IQAC team can use it as a working reference.

Building diagnostic skills — being able to read your own NIRF scorecard and NAAC report like an expert. Understanding which NIRF sub-parameters carry the most weight, which NAAC binary metrics gate entire criteria under the revised NAAC framework, and where your institution is leaving marks on the table.

Knowing the frameworks deeply — what actually moves your NAAC grade? Which 5 NIRF sub-parameters carry 60% of the weight? What are the 5 DVV objection patterns that cause 80% of rejections? These are specific strategic insights that determine whether your preparation efforts pay off.

Creating a unified institutional calendar — not three deadline-driven panics, but a 12-month plan that maps what to do when, across all three frameworks. Same effort, spread evenly, zero emergency.

This direction is exactly where the regulatory environment is heading. The government's One Nation One Data initiative aims to create a single data source for all quality frameworks, so institutions submit once and all agencies pull from the same verified dataset. With NAAC moving to binary accreditation and Maturity-Based Graded Levels (MBGL), the new AI-based assessment already cross-references NIRF and AISHE data automatically. Institutions that integrate now will be ahead of the curve.

At Edhitch, we've spent 12 years helping institutions build exactly this kind of integrated approach. Our advisory practice and diagnostics platform are designed around one principle: your institution's quality is one thing, not three. NAAC, NBA and NIRF are three lenses on the same reality. Treat them that way, and everything gets simpler.

Where should your institution start?

With an honest assessment.

Ask yourself: does your institution treat NAAC, NBA and NIRF as one strategic challenge — or as three separate compliance projects?

The first move isn't buying software or hiring a consultant. It's having one meeting where the IQAC coordinator, the NBA coordinator, and the Principal sit in the same room and ask: "What are we all working on, and where are we duplicating effort?"

That one conversation — when it happens honestly — changes the trajectory.

Once you see the overlap, you can't unsee it. And once leadership decides to treat quality as one integrated system rather than three separate submissions, the entire institution starts moving differently.

Start the conversation. The strategy follows.

Build Your Institution's Integrated NAAC–NBA–NIRF Strategy

Join our workshop — One System. Three Frameworks. — on April 4, 2026.

10 sessions. 7 deliverables. 4 live platform demos. Online via Zoom. 80 seats.

Frequently Asked Questions

What is the overlap between NAAC, NBA and NIRF?

Approximately 68% of what NAAC, NBA and NIRF evaluate overlaps, including teaching quality, research output, graduate outcomes, institutional governance and inclusivity. NBA is programme-level, NAAC is institution-level, and NIRF is a comparative ranking.

How should institutions prepare for NAAC, NBA and NIRF simultaneously?

By treating them as one integrated institutional quality strategy — mapping overlaps, building diagnostic skills, and maintaining a unified 12-month quality calendar instead of three separate panic cycles.

Can NIRF data be used for NAAC SSR preparation?

Yes. NIRF parameters TLR, RP, GO and OI map directly to NAAC Criteria 2, 3, 5, 6, and 7. Recent NIRF submissions already contain significant NAAC data — it needs mapping, not re-collection.

Why do NAAC DVV queries occur due to NIRF data mismatches?

NAAC's DVV cross-references SSR claims against NIRF and AISHE data. Independent preparation leads to different counts for the same metric — triggering queries not from fabrication but from inconsistent counting.

What is an integrated accreditation and ranking strategy?

One continuous quality improvement process covering all three frameworks — understanding overlaps, building diagnostics, maintaining unified records, and planning on one annual calendar.

What changes with NAAC binary accreditation and MBGL 2025?

NAAC replaces CGPA grading with binary accreditation plus optional Maturity-Based Graded Levels (MBGL, L1-L5). The AI-based assessment cross-references NIRF and AISHE data automatically, making integrated consistency even more critical.

Edhitch

Accreditation & Ranking Intelligence Partner. 12 years. 9,000+ participants. Independent diagnostics for NAAC, NBA and NIRF.