The Principal of an autonomous college in Tamil Nadu spent three months preparing for the NAAC peer team visit. Fresh paint. Landscaped gardens. A new welcome banner across the main building. Cultural programme rehearsed. Every department briefed on what to say.

The peer team arrived. Within the first hour, the chairperson asked for the IQAC minutes from the last two years. The coordinator brought a bound file. The chairperson opened it to a random page, read for thirty seconds, and asked: "This meeting resolved to implement a feedback-based curriculum review. Where's the evidence that it happened?"

Silence. The coordinator didn't have the Action Taken Report ready. It existed — somewhere in the IQAC folder — but not indexed, not cross-referenced to the minutes, and not accessible within the two minutes the peer team was willing to wait.

The visit didn't fail because the institution was bad. It stumbled because the institution prepared for a performance when the peer team came to verify a document.

The peer team is not inspecting you. They are verifying your SSR.

This is the single most important thing to understand about the peer team visit. They are not conducting a surprise inspection. They are not evaluating you from scratch. They arrive having already read your Self-Study Report (SSR): every metric, every claim, every qualitative narrative. Their job is to verify whether what you wrote is true.

The quantitative metrics, roughly 70% of your CGPA, are already scored. Data Validation and Verification (DVV) has checked them, and the peer team cannot change those scores. What they evaluate is the qualitative 30%: your institutional culture, your quality processes, your governance, your best practices, and whether the lived reality of your campus matches the narrative in your SSR.

This means the peer team arrives with a mental checklist of things your SSR claimed. Every claim is a promise. The visit is a verification of promises.

Institutions that prepare for a performance disappoint. Institutions that prepare for a verification succeed. The peer team has already read your script — they came to check whether it's real.

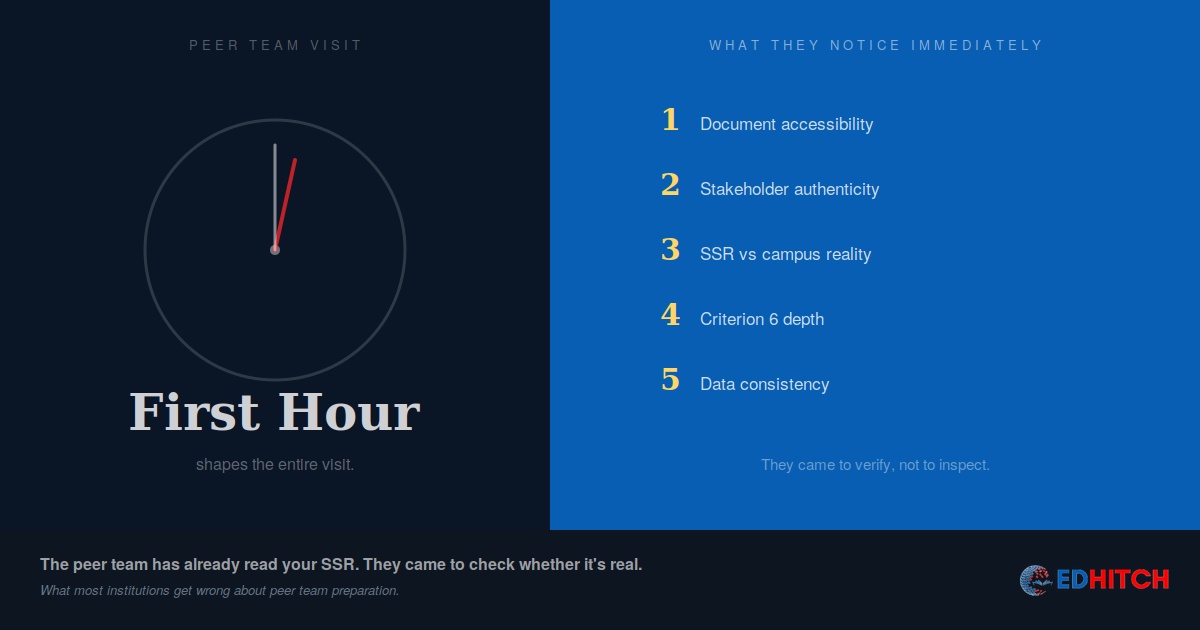

The 5 things they notice in the first hour

1. Document accessibility — can you produce evidence in 2 minutes?

The peer team will ask for specific documents — not in sequence, not criterion-by-criterion, but whatever catches their attention as they read through the SSR. IQAC minutes with ATRs. Board of Governors resolutions. Feedback analysis reports. Extended Profile source documents. Alumni survey results. Financial statements matching the SSR's expenditure claims.

If the IQAC coordinator has to leave the room to find a document, the peer team notices. If someone makes a phone call to a department asking them to "send the file," the peer team notices. If there's a pause longer than two minutes between request and evidence, the peer team begins to wonder what else isn't readily accessible.

Institutions that struggle here aren't disorganised. They're organised by department, not by criterion. The IQAC coordinator knows where everything is, but the peer team doesn't have 30 minutes to learn the coordinator's filing system. The gap between "we have the evidence" and "we can produce the evidence within two minutes" is the gap that costs qualitative marks.

2. Stakeholder authenticity — coached answers are instantly detectable

The peer team will meet students, faculty, alumni, and employers — separately, without the Principal present. The most dangerous preparation mistake an institution can make is coaching these groups on what to say.

Peer team members have conducted dozens of visits. They know what coached answers sound like. When every student says "the institution provides excellent infrastructure and placement support" in nearly identical phrasing, the peer team doesn't believe it — they note it as evidence that the institution is managing perception rather than demonstrating quality.

What works is preparing stakeholders to speak honestly and specifically. A student who says "the new computer lab in Block B helped me complete my final-year project because the earlier lab didn't have the software we needed" is more credible than one who says "the infrastructure is excellent." Specificity is the marker of authenticity. Generality is the marker of coaching.

3. The gap between SSR narrative and campus reality

The peer team walks through your campus. They visit departments, labs, library, hostels, and support facilities. They are comparing what they see with what your SSR describes.

If your SSR claims a "state-of-the-art language lab" and the peer team finds a room with 15 desktops running outdated software, that's a gap. If your SSR describes "robust industry partnerships" but no department can name a specific collaboration outcome from the last year, that's a gap. If your SSR highlights "student mentoring programmes" but students in the meeting say they've never had a mentor assigned, that's a gap.

The fix isn't to exaggerate in the SSR. The fix is to write the SSR honestly and then ensure the campus matches what you wrote. An institution that describes modest facilities accurately scores better than one that describes excellent facilities that don't exist.

4. Criterion 6 depth — governance and IQAC functioning

The peer team pays disproportionate attention to Criterion 6 (Governance, Leadership and Management) because it tells them whether quality improvement is systemic or accidental. They check: Does the IQAC function between accreditation cycles, or does it activate only before visits? Do IQAC meeting minutes show a continuous improvement loop? Is there evidence that IQAC recommendations were actually implemented — not just discussed?

An institution with a strong Criterion 6 story — where the peer team can trace IQAC recommendation → institutional action → measurable outcome → next improvement cycle — creates confidence that the institution's quality is sustainable. An institution where Criterion 6 evidence is thin creates doubt about everything else in the SSR, because the peer team starts wondering: "If the quality assurance mechanism is weak, how reliable are the quality claims?"
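One way to make that trail easy to follow is a simple register that links each IQAC resolution to its Action Taken Report. The Python sketch below is illustrative only. The resolution IDs, field names and sample entries are hypothetical, not a NAAC-prescribed format, and it assumes each ATR records the ID of the resolution it reports on. It lists resolutions with no ATR at all, and resolutions whose ATR records an action but no measurable outcome.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Resolution:
    res_id: str        # hypothetical ID scheme, e.g. "IQAC-2023-01-R1"
    meeting_date: str  # date of the IQAC meeting (ISO format assumed)
    text: str          # the resolution as recorded in the minutes


@dataclass
class ActionTakenReport:
    res_id: str                    # must match the resolution it reports on
    action: str                    # what the institution actually did
    outcome: Optional[str] = None  # measurable outcome, if one was recorded


def untraced_resolutions(resolutions, atrs):
    """Return (resolutions with no ATR, resolutions whose ATRs record no outcome)."""
    atrs_by_id = {}
    for atr in atrs:
        atrs_by_id.setdefault(atr.res_id, []).append(atr)

    missing_atr = [r for r in resolutions if r.res_id not in atrs_by_id]
    missing_outcome = [
        r for r in resolutions
        if r.res_id in atrs_by_id
        and not any(a.outcome for a in atrs_by_id[r.res_id])
    ]
    return missing_atr, missing_outcome


if __name__ == "__main__":
    # Hypothetical sample register.
    resolutions = [
        Resolution("IQAC-2023-01-R1", "2023-01-12", "Implement feedback-based curriculum review"),
        Resolution("IQAC-2023-01-R2", "2023-01-12", "Assign faculty mentors to first-year students"),
        Resolution("IQAC-2023-07-R1", "2023-07-20", "Upgrade language lab software"),
    ]
    atrs = [
        ActionTakenReport("IQAC-2023-01-R2", "Mentors assigned in all departments",
                          "Mentor-mentee records maintained for 2023-24"),
        ActionTakenReport("IQAC-2023-07-R1", "Purchase order issued"),
    ]
    no_atr, no_outcome = untraced_resolutions(resolutions, atrs)
    for r in no_atr:
        print("No ATR:", r.res_id, "-", r.text)
    for r in no_outcome:
        print("ATR but no outcome:", r.res_id, "-", r.text)
```

With the sample data, the feedback-based curriculum review resolution is flagged as having no ATR, which is the same gap the chairperson found in the opening example. The upgraded language lab is flagged as having an ATR but no recorded outcome.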

5. Consistency between data sources

The peer team cross-references. Your SSR says 180 faculty. Your Extended Profile says 175. Your AISHE return says 192. Which number is correct?

Data inconsistencies between the SSR, Extended Profile, AISHE, and NIRF submissions are one of the first things peer teams flag. Cross-portal inconsistencies that survived DVV — perhaps because DVV only checked against the SSR's own documents, not against other submissions — become visible when the peer team asks probing questions and the numbers don't reconcile.

The fix sounds simple — reconcile before the visit. But reconciliation requires knowing which numbers appear in which submissions, which definitions each portal uses, and where your institution's counting methodology differs from each framework's expectations. That reconciliation exercise is institution-specific, and it's one of the first things our diagnostic covers.
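The first step of that exercise, finding where the numbers disagree, can be sketched in a few lines of Python. This assumes someone has already transcribed each figure from each submission into one table. The metric labels are hypothetical, the faculty figures are the ones from the example above, and the student figures are invented for illustration. The script only flags disagreements. Deciding which number is correct still depends on each portal's definitions.

```python
from collections import defaultdict

# Figures transcribed by hand from each submission: (metric, source) -> value.
# Metric labels are hypothetical; student figures are illustrative only.
reported = {
    ("faculty", "SSR"): 180,
    ("faculty", "Extended Profile"): 175,
    ("faculty", "AISHE"): 192,
    ("enrolled_students", "SSR"): 2400,
    ("enrolled_students", "Extended Profile"): 2400,
    ("enrolled_students", "NIRF"): 2400,
}


def find_mismatches(reported):
    """Group values by metric and return only the metrics whose sources disagree."""
    by_metric = defaultdict(dict)
    for (metric, source), value in reported.items():
        by_metric[metric][source] = value
    return {
        metric: sources
        for metric, sources in by_metric.items()
        if len(set(sources.values())) > 1
    }


if __name__ == "__main__":
    for metric, sources in find_mismatches(reported).items():
        detail = ", ".join(f"{src}={val}" for src, val in sources.items())
        print(f"{metric}: {detail}")
```

Only the faculty count is reported, with all three conflicting values side by side. That is the list to resolve, with documented definitions, before the peer team asks.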

The peer team is not trying to catch you. They're trying to verify you. The institutions that do well are the ones that make verification easy — because their data is clean, their evidence is organised, and their campus reality matches their SSR narrative.

The preparation mistake that costs the most marks

Institutions prepare for the visit. They should prepare for the SSR. The visit is downstream — if your SSR is honest, detailed, and supported by accessible evidence, the visit is a verification exercise. If your SSR overpromises, the visit becomes an exposure exercise.

The institutions we work with that score highest on qualitative metrics share one trait: they don't treat the peer team visit as an event. They treat it as a consequence of how they operate year-round. By the time the visit arrives, there's nothing to prepare — because the evidence already exists, the systems already work, and the campus reality already matches the SSR.

The institutions that scramble are the ones that built the SSR first and the evidence second. That ordering is why grades drop.

Whether your institution is in the first category or the second — and what specifically needs to change — is what our diagnostic tells you.

Worried about your peer team visit?

Our NAAC Readiness Diagnostic includes SSR verification readiness, criterion-wise evidence audit, stakeholder preparation review, and a written report identifying exactly what peer teams will flag. Not a generic checklist — an institution-specific assessment.

Frequently Asked Questions

What does the peer team check during their visit?

They verify the qualitative claims in your SSR — roughly 30% of your CGPA. They check institutional culture, quality processes, governance, and whether campus reality matches your SSR narrative.

How long is a peer team visit?

2-3 days for colleges, 3-4 days for universities. The schedule is structured by NAAC and includes meetings with IQAC, leadership, faculty, students, alumni, and facility visits.

Can the visit change my NAAC grade?

Yes. The peer team evaluates qualitative metrics worth ~30% of CGPA. A low qualitative score can drop your CGPA by 0.3-0.5 points — enough to change your grade band.

Should we coach students before the visit?

No. Coached answers are instantly detectable. Prepare students to speak honestly and specifically about their actual experience. Specificity is credible. Rehearsed generalities are not.

What's the most important preparation?

Document accessibility. Every SSR claim should be verifiable within 2 minutes of being asked, which means criterion-wise evidence folders, physical and digital, indexed by metric number.


Edhitch

Accreditation & Ranking Intelligence · NAAC · NBA · NIRF · 12 Years · 100+ Institutions