The Vice Chancellor of a well-established university in South India asked us a question that we've now heard from over thirty institutions:

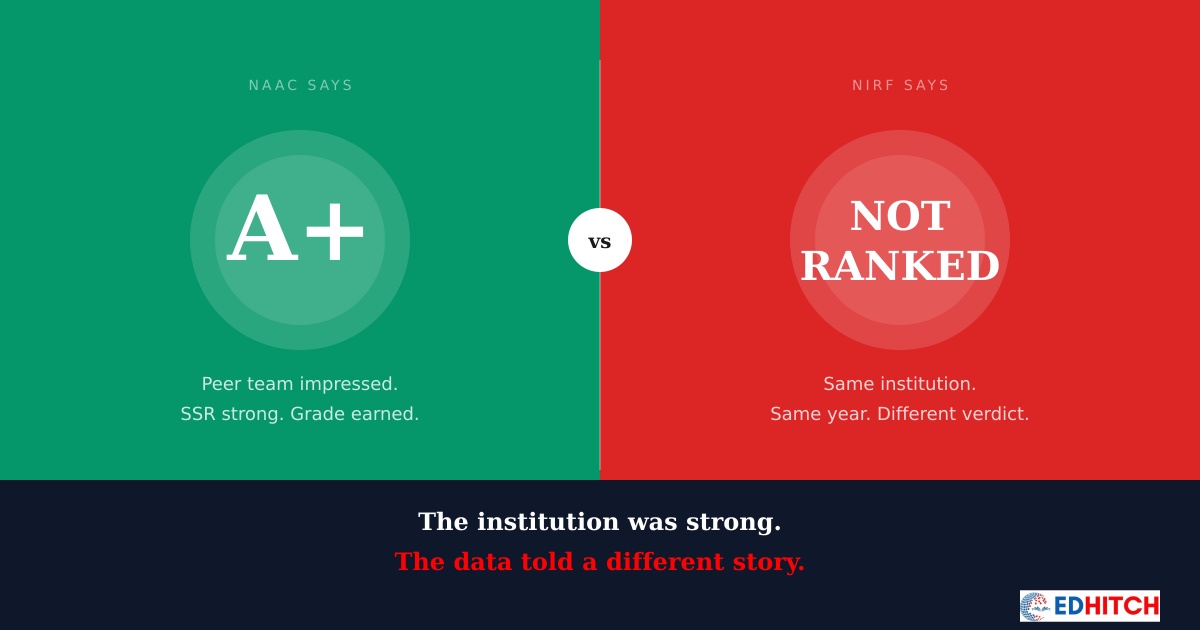

"We got NAAC A+. Our academics are strong. Our faculty is qualified. Our infrastructure is excellent. But we can't get ranked in NIRF. What are we doing wrong?"

He wasn't doing anything wrong. He was treating NAAC and NIRF as if they were the same thing. They're not.

They care about the same things differently

NAAC and NIRF both care about your faculty. Both care about your research. Both care about what happens to your students after graduation. Both care about whether your institution is inclusive and well-governed.

But they measure these things in completely different ways.

NAAC asks you to describe your quality processes, document your achievements, and present evidence to a peer team that visits your campus. It's qualitative at its core — narrative-driven, process-oriented, and evaluated by human judgment.

NIRF takes numbers from multiple sources — some from your portal submission, some from third-party databases — and runs them through a scoring algorithm. It's quantitative at its core — data-driven, formula-based, and evaluated by computation.
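To make "formula-based" concrete, here is a minimal sketch of a weighted composite score followed by a relative ranking. The parameter names, weights, and institution scores are placeholders invented for illustration. They are not NIRF's published methodology, which should be checked against the official documents for your category.

```python
# Hypothetical weights for illustration only; they are assumptions, not official values.
WEIGHTS = {
    "teaching": 0.30,
    "research": 0.30,
    "outcomes": 0.20,
    "outreach": 0.10,
    "perception": 0.10,
}


def composite_score(params):
    """Weighted sum of parameter scores, each assumed to be on a 0-100 scale."""
    return sum(WEIGHTS[name] * params[name] for name in WEIGHTS)


def rank_institutions(scores_by_institution):
    """Return institution names ordered from highest to lowest composite score."""
    composites = {
        name: composite_score(params)
        for name, params in scores_by_institution.items()
    }
    return sorted(composites, key=composites.get, reverse=True)


# Invented example data: A is stronger on teaching, B is stronger on research.
institutions = {
    "A": {"teaching": 80, "research": 40, "outcomes": 70, "outreach": 60, "perception": 20},
    "B": {"teaching": 60, "research": 80, "outcomes": 60, "outreach": 50, "perception": 40},
}

ranking = rank_institutions(institutions)
```

In this made-up example, B ranks above A despite A's stronger teaching score, because the rank depends only on how the numbers combine and compare.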

An institution can write an excellent SSR, present beautifully to the peer team, and earn NAAC A+ — while the same institution's data, when processed through NIRF's algorithm, tells a different story.

NAAC rewards how well you tell your story. NIRF rewards how well your numbers perform against every other institution in the country.

The data doesn't travel between frameworks

Here's the deeper problem. At most institutions, the NAAC team and the NIRF team don't talk to each other. They work in separate timelines, with separate data, for separate submissions.

The NAAC SSR is prepared over months — sometimes a year — with dedicated faculty and consultants. The NIRF portal is filled in days, often by a single person pulling numbers from wherever they can find them.

The result? The same institution reports different numbers for the same facts in two different frameworks. Not because anyone is dishonest. Because nobody cross-checked.

Faculty count doesn't match. Research numbers don't match. Financial data is classified differently. Graduate outcomes are counted differently. And increasingly, these mismatches are being noticed — not just by us, but by the frameworks themselves.

NAAC's DVV process now cross-references data from NIRF and AISHE. NIRF pulls publication data from Scopus independently. The government's One Nation One Data push is heading towards a single institutional data repository. The days of maintaining separate stories for separate frameworks are ending.
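The cross-check that usually gets skipped can be sketched in a few lines. This is a minimal illustration, assuming each submission's headline figures have already been collected into a simple mapping. The metric names and values below are invented, and `find_mismatches` is a hypothetical helper, not part of any official tool.

```python
def find_mismatches(naac, nirf, tolerance=0.0):
    """Compare metrics reported in both submissions.

    Returns {metric: (naac_value, nirf_value)} for every shared metric
    whose two values differ by more than `tolerance`.
    """
    mismatches = {}
    for metric in sorted(naac.keys() & nirf.keys()):
        naac_value, nirf_value = naac[metric], nirf[metric]
        if abs(naac_value - nirf_value) > tolerance:
            mismatches[metric] = (naac_value, nirf_value)
    return mismatches


# Invented figures for one reporting year, for illustration only.
naac_ssr = {"full_time_faculty": 212, "scopus_publications": 340, "phd_awarded": 18}
nirf_portal = {"full_time_faculty": 198, "scopus_publications": 340, "phd_awarded": 21}

mismatches = find_mismatches(naac_ssr, nirf_portal)
for metric, (naac_value, nirf_value) in mismatches.items():
    print(f"{metric}: NAAC={naac_value}, NIRF={nirf_value}")
```

A real check would also need to confirm that both figures cover the same reporting period and use the same definition, since a mismatch can come from a definitional difference rather than an error.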

What strong NAAC institutions get wrong about NIRF

Institutions that score well in NAAC develop a certain confidence — understandably so. They've been through a rigorous process. They've faced a peer team. They've earned their grade.

But that confidence sometimes becomes a blind spot for NIRF. They assume that good academics automatically translate into good NIRF scores. They assume that NAAC A+ means they're doing everything right.

NIRF doesn't know about your NAAC grade. It doesn't read your SSR. It doesn't care about your best practices or your institutional vision. It takes raw numbers, compares them against every other institution in your category, and produces a rank. If your numbers don't perform — regardless of how good your institution actually is — your rank suffers.

We've worked with institutions that are genuinely excellent — great faculty, engaged students, meaningful research — but their NIRF data doesn't reflect any of it. The institution is strong. The data is weak.

An institution's NIRF rank is not a measure of its quality. It's a measure of how well its quality is captured in data — and how that data performs against a specific algorithm.

The cost of treating them as separate projects

When NAAC and NIRF are handled as separate compliance exercises, three things happen.

First, effort is duplicated. The same faculty data is collected twice. The same research data is compiled twice. The same financial data is extracted twice. Different formats, different teams, different timelines — but the same underlying information being processed in parallel with no coordination.

Second, inconsistencies accumulate. When two teams independently count faculty, they arrive at different numbers. When two teams independently classify expenditure, the totals don't match. These inconsistencies don't just cause confusion — they trigger scrutiny from both NAAC DVV and NIRF's data verification processes.

Third, the institution misreads its own position. The NAAC grade creates a sense of accomplishment. The NIRF rank — or lack of one — creates confusion. Leadership doesn't understand how both can be true simultaneously. And without someone who can read both frameworks together, they can't see where the disconnect lies.

Why this gap is growing

Five years ago, NAAC and NIRF operated largely independently. An institution could prepare for each one separately without much consequence.

That's changing rapidly.

NAAC is moving towards binary accreditation with AI-based assessment that will pull data automatically from NIRF and AISHE. NIRF is tightening its data verification and penalising inconsistencies. The government is pushing for unified institutional data reporting.

Institutions that continue to treat NAAC and NIRF as separate projects are building a problem that compounds every year. The data silos they maintain today will become the inconsistencies they're penalised for tomorrow.

What actually needs to happen

The fix isn't working harder on NAAC or working harder on NIRF. The fix is understanding how the two frameworks interact — where they overlap, where they diverge, and where your institution's data behaves differently in each one.

That understanding is institution-specific. It depends on your category, your data history, your reporting patterns, and your organisational structure. Two NAAC A+ institutions can have completely different NIRF problems — because the gap between the frameworks manifests differently at every institution.

Every institution's gap is different. Where the disconnect lies — and how deep it runs — depends entirely on your data, your category, and your reporting history. That's why a cookie-cutter approach doesn't work.

The institutions that perform well in both NAAC and NIRF are not the ones that work twice as hard. They're the ones that work once, with an integrated understanding of how both frameworks read the same underlying institutional reality.

The question isn't "how do we improve our NIRF rank." The question is "why does NIRF see a different institution than NAAC does?" The answer is always in the data — and it's always specific to the institution.

We Diagnose This Exact Gap

Our NIRF Diagnostic reads your institutional data through both the NAAC and NIRF lens — identifying exactly where the disconnect lies and what's causing your rank to underperform your quality.

We also cover integrated NAAC-NBA-NIRF strategy in our 5-Day programme: April 6-10, 2026 · 7-9 PM · Online

Register Now · ₹2,499 →

Frequently Asked Questions

Can an institution have NAAC A+ but no NIRF rank?

Yes. NAAC and NIRF use different frameworks, different data sources, and different scoring methods. Performing well in one does not guarantee performance in the other.

What is the difference between NAAC and NIRF?

NAAC assesses quality through documentation and peer review. NIRF ranks institutions comparatively using numerical data and algorithms. They overlap in what they care about but differ in how they measure it.

Does NAAC grade affect NIRF ranking?

Not directly. But inconsistencies between the two can hurt both — especially as cross-referencing between frameworks becomes automated.

Why do institutions treat them as separate projects?

Different formats, timelines, and teams. This creates data silos where the same facts are reported differently — leading to inconsistencies that affect performance in both.

How can institutions perform well in both?

By treating them as one integrated strategy. This requires understanding how the frameworks overlap and diverge — which is an institution-specific exercise.

Edhitch

Accreditation & Ranking Intelligence · NAAC · NBA · NIRF · 12 Years · 100+ Institutions