A university in North India decided to improve its research parameter. The VC set a target: two publications per faculty per year. Every department was told to deliver.

By the end of the year, publication numbers had doubled. The leadership was satisfied. Then the NIRF results came.

Their RP score had barely moved.

What happened? Most of the publications were in low-quality journals that didn't carry the weightage NIRF assigns. Junior faculty without research experience had been pressured to publish — producing papers that met the quantity target but not the quality threshold. The effort was real. The strategy was wrong.

This is the most common pattern we see across institutions trying to improve their NIRF ranking: effort without diagnosis. They hear what worked at another institution, copy the approach, and expect similar results. But every institution is different — different faculty mix, different student demographics, different data quality, different starting point. What works for a 50-year-old autonomous college in Tamil Nadu won't work for a 10-year-old private university in Haryana.

After working with 100+ institutions across 12 years, here's what we've found actually moves NIRF ranks — and what doesn't.

Why most "improvement plans" fail

When institutions decide to improve their ranking, they typically do one of four things: hire a consultant, form a committee, start collecting data frantically, or spend money on infrastructure. None of these are wrong in isolation. But without understanding where marks are actually being lost, all of them are guesses.

The consultant may not study your data at the sub-parameter level. The committee meets monthly but doesn't have decision-making authority. The data collection is rushed and unclean. The infrastructure spending shows up as capital expenditure that NIRF doesn't weight the way you think.

Another institution we know spent ₹48 crore on a new campus wing. State-of-the-art building. Their NIRF rank that year went down. Why? NIRF measures resources per student and how they're utilised — not how much you spent on construction. Once your infrastructure crosses the adequacy threshold, additional spending adds zero marks. That ₹48 crore showed up as capital expenditure and barely moved the FRU sub-parameter.

The fundamental mistake is treating NIRF as a form-filling exercise rather than a strategic exercise. Institutions fill numbers into the portal and hope for the best. They never sit with their own scorecard and ask: "Which specific sub-parameter is costing us the most marks, and what exactly would move it?"

That question is where improvement actually begins.

The diagnostic-first approach: what we actually look at

When we sit down with an institution's NIRF data for the first time, we don't start with recommendations. We start with diagnosis. Here's the sequence:

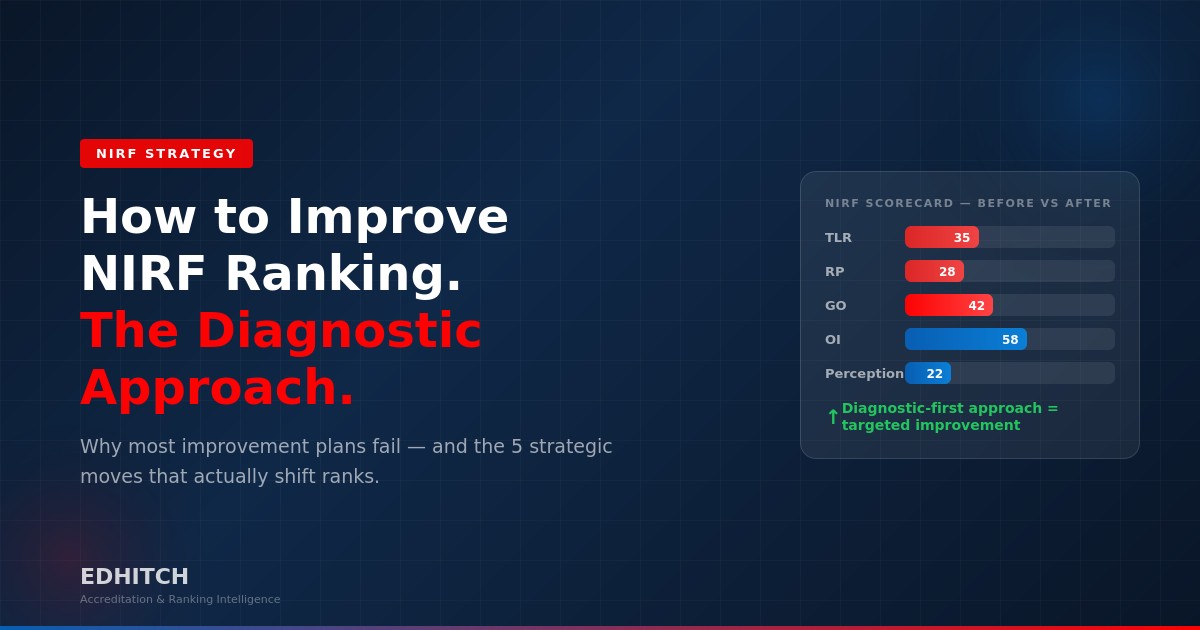

Step 1: Read the scorecard like a doctor reads a report. We look at the five parameter scores: Teaching, Learning and Resources (TLR), Research and Professional Practice (RP), Graduation Outcomes (GO), Outreach and Inclusivity (OI), and Perception. Then we identify which ones are significantly below the median for the institution's band. If your TLR is 35 out of 100 and the median for your band is 52, that's where the bleeding is. We don't try to improve everything at once.

Step 2: Go one level deeper — sub-parameters. Within the weak parameter, we identify which sub-parameter carries the most weight and where the gap is largest. For example, within TLR, if faculty count is inflated with 30 ineligible names, every ratio depending on faculty count is distorted. We've seen this single issue swing TLR scores by 15-20 marks.

Step 3: Compare submitted data against reality. We pull the institution's actual faculty records, publication lists, financial statements, and graduate outcome data, then compare them against what was submitted in the NIRF portal. The gap between reality and submission is where the quick wins are. Often the institution is better than its submission suggests; it simply reported the data wrong.

Step 4: Benchmark against peers. We compare the institution's sub-parameter scores against 5-10 institutions in the same NIRF band and category. This reveals which improvements would move them into the next band versus which improvements would keep them where they are. Not all improvements are equal — some cross thresholds, others don't.

Step 5: Prioritise by impact-to-effort ratio. Some fixes take 2 hours (correcting Scopus affiliation). Some take 2 years (building a doctoral programme). We map every improvement opportunity on an impact-effort matrix and build the roadmap accordingly. The IQAC team gets a clear answer: "Do this first. Then this. Then this."
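Steps 1 and 5 can be sketched in a few lines of Python. This is a minimal illustration rather than a scoring tool: the parameter scores, the band medians, the candidate fixes, and their mark and effort estimates are all hypothetical values that an institution would replace with its own.

```python
# Hypothetical parameter scores and band medians (not real NIRF data).
scores = {"TLR": 35, "RP": 22, "GO": 58, "OI": 51, "Perception": 12}
band_median = {"TLR": 52, "RP": 25, "GO": 60, "OI": 50, "Perception": 14}

# Step 1: gap to the band median, largest shortfall first.
gaps = sorted(
    ((name, band_median[name] - scores[name]) for name in scores),
    key=lambda item: item[1],
    reverse=True,
)

# Step 5: candidate fixes with estimated marks gained and effort in days.
# Both estimates are assumptions made for illustration.
fixes = [
    {"fix": "Correct Scopus affiliation", "marks": 6.0, "effort_days": 1},
    {"fix": "Audit faculty list", "marks": 15.0, "effort_days": 5},
    {"fix": "Build doctoral programme", "marks": 20.0, "effort_days": 500},
]


def impact_per_day(fix):
    """Estimated marks gained per day of effort."""
    return fix["marks"] / fix["effort_days"]


# Highest impact-to-effort ratio first.
roadmap = sorted(fixes, key=impact_per_day, reverse=True)

print("Weakest parameter:", gaps[0])
for step, fix in enumerate(roadmap, start=1):
    print(step, fix["fix"], round(impact_per_day(fix), 2))
```

A single ratio is the crudest possible version of an impact-effort matrix. In practice a fix that crosses a band threshold may deserve priority even when its ratio is lower.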

This diagnostic approach — not a generic "10 tips to improve NIRF" list — is why institutions move after working with us. The strategy is specific to their data, their gaps, their institution.

Five strategic moves that actually shift NIRF ranks

These are not generic tips. These are interventions we've implemented that produced measurable rank movement at the institutions concerned.

1. Clean your faculty count against NIRF eligibility criteria

This is the single highest-impact fix at most institutions. NIRF requires regular, full-time faculty who meet specific criteria: minimum qualifications, taught both semesters, not on extended leave, not counted at another institution. Most institutions submit their entire register, including retirees, faculty on deputation, visiting faculty, and people who joined mid-year.

At one medical university, we found 30 of 247 listed faculty were marked "not currently working" — and 32 more were designated as "Other" (tutors, demonstrators) who may not count as regular faculty. That's potentially 62 names that shouldn't be in the NIRF faculty count. Since faculty count is the denominator in student-faculty ratio, publications per faculty, and expenditure per faculty — every ratio shifts when this is corrected.

The fix takes one week of HR audit. The impact on the scorecard is immediate.
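The arithmetic behind the medical university example can be sketched as follows. The faculty figures come from the example above; the student and publication counts are hypothetical, chosen only to make the ratios concrete. The sketch also assumes the worst case, in which all 62 flagged names turn out to be ineligible.

```python
# Figures from the medical university example above.
listed_faculty = 247
not_currently_working = 30
designated_other = 32  # tutors, demonstrators; eligibility uncertain

# Hypothetical values, used only to make the ratios concrete.
students = 3700
publications = 370


def per_faculty(quantity, faculty_count):
    """Any 'per faculty' ratio: quantity divided by faculty count."""
    return quantity / faculty_count


# Worst case: every flagged name turns out to be ineligible.
eligible_faculty = listed_faculty - not_currently_working - designated_other

submitted_sfr = per_faculty(students, listed_faculty)
corrected_sfr = per_faculty(students, eligible_faculty)
submitted_ppf = per_faculty(publications, listed_faculty)
corrected_ppf = per_faculty(publications, eligible_faculty)

print(f"Eligible faculty: {eligible_faculty}")
print(f"Students per faculty: {submitted_sfr:.1f} -> {corrected_sfr:.1f}")
print(f"Publications per faculty: {submitted_ppf:.2f} -> {corrected_ppf:.2f}")
```

Every ratio with faculty in the denominator rises once the count is corrected. That helps publications per faculty but raises students per faculty as well, so the net effect on the scorecard depends on the institution's own numbers.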

2. Audit and merge your Scopus affiliation profile

If your faculty published under "ABC College," "ABC College of Engineering," "ABC College of Engg., Autonomous," and "ABC Engineering College" — Scopus treats these as four different institutions. NIRF matches against one affiliation ID. We've seen institutions lose 30-40% of their publication credit because of this.

A university in Madhya Pradesh had over 180 publications but NIRF was counting fewer than 120. The reason: four different spellings on Scopus. After we helped them merge affiliations and standardise the name, their RP score improved significantly in the next cycle — without a single new publication.

The fix: write to Scopus requesting affiliation merge. It takes 4-6 weeks to process. Start now.
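Before writing to Scopus, it helps to know how the institution's output is split across name variants. The sketch below tallies a locally exported list of affiliation strings. The list contents and the choice of primary name are hypothetical, and no Scopus API is used or assumed.

```python
from collections import Counter

# Hypothetical export: one affiliation string per publication record.
affiliations = (
    ["ABC College of Engineering"] * 6
    + ["ABC College"] * 2
    + ["ABC College of Engg., Autonomous"] * 1
    + ["ABC Engineering College"] * 1
)

# Assumed: the variant that the ranking data is matched against.
primary_name = "ABC College of Engineering"

variant_counts = Counter(affiliations)
total = sum(variant_counts.values())
credited = variant_counts[primary_name]

# Share of publications filed under any other spelling.
uncredited_share = (total - credited) / total

for name, count in variant_counts.most_common():
    print(f"{count:3d}  {name}")
print(f"Share filed under other spellings: {uncredited_share:.0%}")
```

The list of variants and their counts is also the evidence to attach to the merge request.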

3. Reclassify your financial expenditure with NIRF categories in mind

Your accounts department classifies expenditure for audit and compliance. NIRF classifies it for ranking. These are not the same. If ₹1.2 crore of library spending is filed under "capital expenditure" instead of "recurring academic expenditure," your FRU (Financial Resources and their Utilisation) score may not see it.

At one institution, we found ₹10.9 crore classified under "Engineering Workshops" — at a medical university that has no engineering department. This was clearly hospital/clinical equipment that was miscategorised. Correct classification would have directly improved their TLR financial sub-parameter.

The fix: one meeting with your accounts officer. Review how library, lab, IT, and research spending is categorised. Align it with NIRF's expenditure definitions. Zero cost, potentially 8-12 marks recovered in TLR.
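One way to prepare for that meeting is to scan a ledger export for heads that cannot be right, such as "Engineering Workshops" at a medical university. The sketch below is a rough illustration: the ledger rows, the keyword mapping, and the list of units are hypothetical. Flagged rows are candidates for human review against NIRF's own expenditure definitions, not automatic reclassifications.

```python
# Hypothetical ledger export: (expenditure head, amount in crore rupees).
ledger = [
    ("Engineering Workshops", 10.9),
    ("Library Books and Journals", 1.2),
    ("Clinical Laboratory Equipment", 4.5),
]

# Assumed mapping from a keyword in the head to the unit it implies.
head_keywords = {
    "engineering": "engineering",
    "clinical": "medicine",
    "library": "library",
}

# Units this (medical) institution actually has.
existing_units = {"medicine", "library"}


def implied_unit(head):
    """Return the unit implied by the head, or None if no keyword matches."""
    lowered = head.lower()
    for keyword, unit in head_keywords.items():
        if keyword in lowered:
            return unit
    return None


# Flag heads that point at a unit the institution does not have.
# Heads with no matching keyword (None) are flagged for review as well.
flagged = [
    (head, amount)
    for head, amount in ledger
    if implied_unit(head) not in existing_units
]

for head, amount in flagged:
    print(f"Review: {head} ({amount} crore)")
```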

4. Track complete graduate outcomes — not just placements

NIRF's Graduation Outcomes (GO) parameter measures placements, higher education progression, median salary, and entrepreneurship. Most institutions only track placements through their Training & Placement cell. The students who went to M.Tech, MBA, PhD, civil services, or started businesses? Unreported.

At one medical university, 161 students graduated from the 5-year programme in 2023-24. The NIRF data showed only 1 outcome reported. Medical graduates almost always enter residency, practice, or super-specialty programmes — the actual outcome rate is likely 90%+. The entire GO parameter was being calculated on near-zero data because nobody was tracking outcomes beyond the placement cell's scope.

The fix: a simple follow-up system — even a Google Form sent to graduating students and alumni — capturing employment, higher education, and self-employment data. One afternoon to set up. Fills a gap most institutions don't know they have.
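Once responses start coming in, two numbers are worth watching: how much of the cohort has a recorded outcome, and how the outcomes break down. A minimal sketch, using the cohort size from the example above and hypothetical form responses and category labels:

```python
from collections import Counter

cohort_size = 161  # graduates in the example above

# Hypothetical form responses: one outcome label per responding graduate.
responses = (
    ["higher_education"] * 90
    + ["employed"] * 40
    + ["self_employed"] * 5
)


def coverage(reported, cohort):
    """Fraction of the graduating cohort with a recorded outcome."""
    return reported / cohort


outcome_counts = Counter(responses)

before = coverage(1, cohort_size)               # only 1 outcome reported
after = coverage(len(responses), cohort_size)   # with follow-up responses

print(f"Coverage before follow-up: {before:.1%}")
print(f"Coverage after follow-up:  {after:.1%}")
for outcome, count in outcome_counts.most_common():
    print(f"{outcome}: {count}")
```

Tracking coverage separately from the outcome mix makes it clear whether a weak GO score reflects weak outcomes or simply missing records.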

5. Focus research investment on quality, not quantity

The North India university that mandated two publications per faculty learned this the hard way. Fifty low-quality papers in predatory journals don't move RP the way fifteen well-placed papers in Scopus-indexed, high-impact journals do.

One institution that moved from the 180-200 band to inside the top 100 did something different. Instead of asking all faculty to publish, they identified their top 15 research-active faculty and supported them with a reduced teaching load, funded conference attendance, and research assistants. They also created a structure where undergraduate final-year projects were co-authored with faculty, turning routine student work into publishable output.

The strategy isn't "publish more." It's "publish better, with fewer people, and ensure every paper is correctly attributed."

What the Vice Chancellor must do vs what the IQAC can handle

NIRF improvement fails when it's treated as an IQAC-only responsibility. The IQAC coordinator can clean data and fill the portal. But the decisions that actually move ranks come from the Vice Chancellor or Principal's office.

VC-level decisions (IQAC cannot make these alone):

Research strategy: Deciding whether to mandate publication quantity or invest in quality. Allocating research budgets. Approving faculty incentives for high-impact publications. Creating doctoral programmes.

Faculty hiring: Prioritising PhD-qualified, research-active faculty in recruitment. Ensuring HR maintains NIRF-eligible records — not just payroll records.

Financial classification: Directing the accounts department to classify expenditure with NIRF parameters in mind — not just audit compliance. This is a policy decision only the VC can mandate.

Institutional data governance: Establishing that all departments — HR, accounts, placement cell, research cell, examination — feed data into a unified system rather than maintaining separate records that contradict each other.

Vision communication: The VC must clearly communicate where the institution stands, where it needs to reach, and what the improvement strategy is. If the IQAC team doesn't know the VC's vision, they can't execute it. "Know yourself and your standing in the mirror" — as one VC we work with puts it — is the starting point.

IQAC-level execution (with VC support):

Data cleaning and validation. Portal submission. Coordination across departments. Evidence chain preparation for NAAC DVV. Monitoring the 12-month quality calendar. Tracking graduate outcomes. Maintaining faculty records in NIRF-eligible format.

The line is clear: the VC sets the strategy, the IQAC executes it. When both are aligned, ranks move. When the IQAC works in isolation, they're filling forms without institutional backing — and the results reflect that.

Realistic timelines — not motivational fluff

Here's the honest answer on how long improvement takes:

If your problem is data accuracy (misrepresentation, miscounting, misclassification): One cycle. Clean the faculty list, fix Scopus attribution, reclassify financial data, capture graduate outcomes. These are fixable in 1-3 months, and the improved data will reflect in the next NIRF submission. We've seen institutions gain 15-30 ranks from data correction alone.

If your problem is genuine institutional gaps (low research output, poor faculty qualifications, weak student outcomes): Three to five years. NIRF uses a 3-year data average for most parameters. Even if you hire 20 PhD faculty today, the impact takes 2-3 cycles to fully show because the earlier years' weaker data dilutes the improvement.
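The dilution effect is easy to see with a trailing average. The sketch below assumes a simple unweighted 3-year mean, as described above; the yearly figures are hypothetical, and the exact formula NIRF applies may differ by sub-parameter.

```python
# Hypothetical count of PhD-qualified faculty at the end of each year.
# Twenty PhD faculty are hired at the start of year 4.
phd_faculty_by_year = [40, 40, 40, 60, 60, 60]


def trailing_mean(values, window=3):
    """Mean of each run of `window` consecutive values, oldest first."""
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]


averages = trailing_mean(phd_faculty_by_year)

# The first full window ends in year 3, so cycle numbering starts there.
for cycle, value in enumerate(averages, start=3):
    print(f"Cycle ending year {cycle}: 3-year average = {value:.1f}")
```

Under these assumptions the hire in year 4 lifts the average by only a third of its size in the first cycle, and the full effect appears in the cycle ending in year 6.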

The practical approach: Do both simultaneously. Fix data accuracy issues immediately for quick wins in the next cycle. Build a 3-year improvement roadmap for structural changes. The data fixes buy you rank improvement that buys you credibility that buys you time to make the deeper institutional changes.

Most institutions that stagnate are doing neither — they're not fixing data accuracy because they don't know it's wrong, and they're not building long-term strategy because they're too busy firefighting submission deadlines.

The one thing most institutions never do

After 12 years and 100+ institutions, here's the single practice that separates institutions that improve from those that stay stuck: they build a system, not a one-time effort.

Institutions that improve their NIRF rank consistently are not the ones that formed a committee before the deadline. They're the ones where quality processes run continuously — through systems, not through people's memory.

Feedback collection is automated, not manual once a year. Faculty records are updated monthly, not scrambled together in January. Graduate outcomes are tracked from convocation day, not reconstructed from memory during submission week. Financial data is classified correctly at the time of entry, not reclassified during NIRF season.

The institutions that are stuck cycle after cycle are doing the same thing every year: panic, collect, submit, wait, be disappointed, repeat. The institutions that improve are the ones that built a system where data flows continuously, gets validated regularly, and feeds all three frameworks — NIRF, NAAC, and NBA — simultaneously.

That's not a technology problem. It's a discipline problem. And it starts with a diagnostic that shows you exactly where you stand — so you can build the system around your specific institutional reality, not someone else's.

Start with the diagnosis. Build the system. The rank follows.

Get Your Institution's NIRF Diagnostic

Join our Full-Day Programme — Integrated Strategies for NBA · NAAC · NIRF — on April 4, 2026.

Session 2 is a live NIRF scorecard diagnostic. Session 5 decodes every sub-parameter with improvement levers. You'll walk away with a self-diagnostic worksheet and a 12-month improvement calendar.

Frequently Asked Questions

How long does it take to improve NIRF ranking?

If the issue is data accuracy (wrong faculty counts, missing outcomes, misclassified expenditure), improvements can show in one cycle. For genuine institutional gaps, expect three to five years, since NIRF uses a 3-year data average for most parameters.

What is the first step to improve NIRF rank?

Start with a diagnostic — analyse your scorecard at the sub-parameter level to find where marks are actually being lost. Most institutions find that 60-70% of their gap comes from data accuracy issues, not institutional weaknesses.

Can NIRF rank improve without spending more money?

Yes. Most improvements come from data accuracy — cleaning faculty counts, correcting Scopus affiliations, properly classifying financial data, and capturing complete graduate outcomes. These are low-cost or zero-cost fixes.

Why do institutions fail to improve NIRF ranking?

The most common mistake is copying what other institutions did without diagnosing their own specific gaps. Every institution is different. Without a diagnostic, improvement efforts are misdirected.

What role should the Vice Chancellor play in NIRF improvement?

The VC sets the strategic vision — research strategy, faculty hiring priorities, accounts classification policy, institutional data governance. The IQAC executes but cannot make these structural decisions alone.

What is the most impactful habit for NIRF improvement?

Build a system, not a one-time effort. Automate feedback collection, update faculty records monthly, track graduate outcomes from convocation day, classify financial data correctly at entry. Continuous processes beat annual panic.

How does NIRF connect to NAAC and NBA?

68% of what NIRF evaluates overlaps with NAAC and NBA. An integrated strategy that treats them as one quality system is more efficient and produces better results than three separate compliance projects.

Edhitch

Accreditation & Ranking Intelligence. 12 years. 9,000+ participants. Integrated strategies for NAAC, NBA and NIRF.